The Future of Human Agency

Author: Stephen Downes


My response to the Pew/Elon University Survey.

By 2035, will smart machines, bots and systems powered by artificial intelligence be designed to allow humans to easily be in control of most tech-aided decision-making that is relevant to their lives?

This question can be interpreted in several ways: Could there be any technology that allows people to be in control? Will some such technology exist? And will most technology be like that? My response is that the technology will exist. It will have been created. But it is not at all clear that we will be using it.

There will definitely be decisions out of our control, for example, whether we are allowed to purchase large items on credit. These decisions are made autonomously by the credit agency, which may or may not use autonomous agents. If the agent denies credit, there is no reason to believe that a human could, or even should, be able to override this decision.

Many decisions like this about our lives are made by third parties, and we have no control over them, for example, credit ratings, insurance rates, the outcomes of criminal trials, employment applications and tax rates. Perhaps we can influence them, but they are ultimately out of our hands.

But most decisions made by technology will be like those made by a simple device, for example, one that controls the temperature in your home. It could function as an autonomous thermostat, setting the temperature based on your health, on external conditions, on your finances and on the cost of energy. The question boils down to whether we could control the temperature directly, overriding the decision made by the thermostat.

For something simple like this, the answer seems obvious: Yes, we would be allowed to set the temperature in our homes. For many people, though, it may be more complex. A person living in an apartment complex, condominium or residence may face restrictions on whether and how they control the temperature.

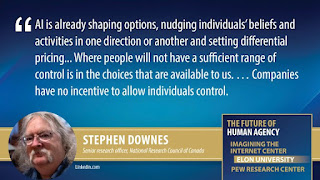

Most decisions in life are like this. There may be constraints such as cost, but generally, even if we use an autonomous agent, we should be able to override it. For most tasks, such as shopping for groceries or clothes, choosing a vacation destination, or selecting videos to watch, we expect to have a range of choices and to be able to make the final decisions ourselves. Where we will lack sufficient control, though, is over the choices that are available to us in the first place. We are already seeing artificial intelligences used to shape market options to benefit the vendor by limiting the choices the purchaser or consumer can make.

For example, consider the ability to select what things to buy. In any given category, the vendor will offer a limited range of items. These menus are designed by an AI and may be based on your past purchases or preferences but are mostly (like a restaurant’s specials of the day) based on vendor needs. Such decisions may be made by AIs deep in the value chain; market prices in Brazil may determine what’s on the menu in Detroit.

Another common example is differential pricing. The price of a given item may be varied for each potential purchaser based on the AI’s evaluation of that purchaser’s willingness to pay. We don’t have any alternatives: if we want that item (that flight, that hotel room, that vacation package), we have to choose from the prices the vendors choose to offer, not from all the prices that are available. Or you may want heated seats in your BMW and find that the only option is an annual subscription. Really.

Terms and conditions may reflect another set of decisions being made by AI agents that are outside our control. For example, we may purchase an e-book that comes with an autonomous agent that scans our digital environment and restricts where and how the e-book may be viewed. Your coffee maker may decide that only approved coffee containers are permitted. Your car (and especially a rental car) may prohibit certain driving behaviours.

All this will be the norm, and so the core question in 2035 will be: What decisions need (or allow) human input? The answer, depending on the state of individual rights, is that they might be vanishingly few. For example, we may think that life-and-death decisions need human input. But it will be very difficult to override the AI even in such cases. Hospitals will defer to what the insurance company’s AI says, judges will defer to the criminal-justice AI, and pilots, like those on the 737 MAX, cannot override automated systems and have no way to counteract them. Could there be human control over these decisions being made in 2035 by autonomous agents? Certainly, the technology will have been developed. But unless the relationship between individuals and corporate entities changes dramatically over the next dozen years, it is very unlikely that companies will make it available. Companies have no incentive to allow individuals control.